Project scope

In this project, you will have the opportunity to conduct research and development on a state-of-the-art legged robot platform, the Unitree Go2, with the goal of demonstrating autonomous, embodied intelligence on the platform.

This project involves developing a software framework for navigating and controlling the robot, both in simulation and during execution on the physical robot. You will be expected to build the framework from open-source libraries and simulators. The research question is flexible in scope; possible directions include, but are not limited to, motion planning, imitation learning, reinforcement learning, Vision-Language-Action models, planning under uncertainty, and integrated learning and planning.

The robot

You can find coverage of this robot here.

Features and capabilities

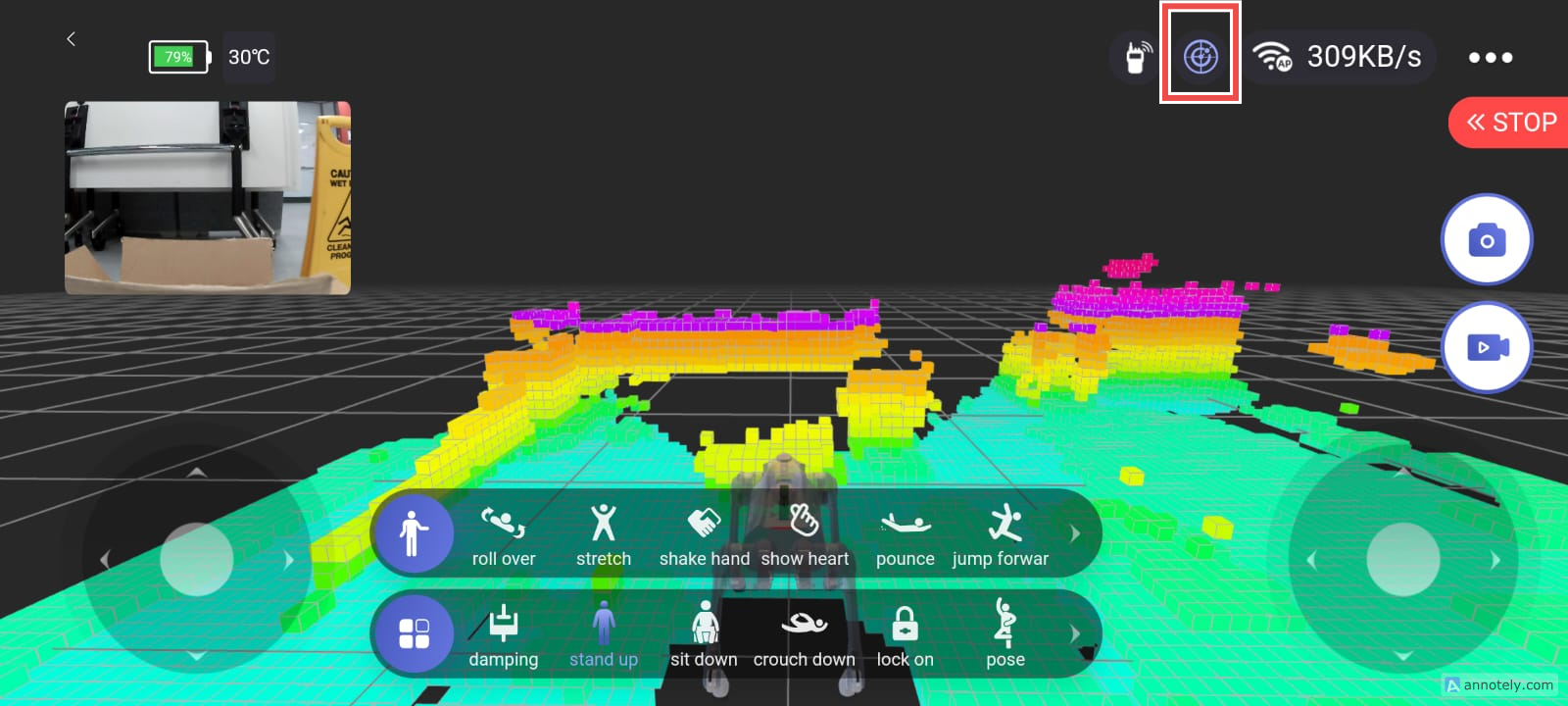

- RGBD camera

- LIDAR

- NVIDIA Jetson

- Mountable arm

Development guides

Prerequisite skills

- Proficiency in Python or C++

Recommended skills / technologies students can be exposed to

- Understanding of basic multivariate statistics (e.g., the multivariate Gaussian distribution)

- Understanding of Bayesian inference

- Understanding of basic motion planning algorithms (e.g., PRM, RRT)

- Experience with deep learning frameworks (e.g., PyTorch, TensorFlow, JAX)

- Experience with the Robot Operating System (ROS)

- Experience with simulators (e.g., Gazebo, MuJoCo, Isaac Sim)
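To give a sense of the level implied by "basic motion planning algorithms", here is a minimal sketch of RRT (Rapidly-exploring Random Tree) in a 2D plane, written in plain Python. It is illustrative only and not part of the project framework: the function name `rrt`, the circular-obstacle model, the 10% goal bias, and the parameter values are all assumptions chosen for the example, and the start is assumed to be collision-free. A real planner for the Go2 would work in the robot's configuration space with a proper collision checker.

```python
import math
import random

def rrt(start, goal, obstacles, bounds, step=0.5, goal_tol=0.5, max_iters=5000, seed=0):
    """Minimal 2D RRT sketch (hypothetical helper, not a library API).

    obstacles: list of (cx, cy, r) circles; bounds: (xmin, xmax, ymin, ymax).
    Returns a list of (x, y) waypoints from start to goal, or None if no path is found.
    """
    rng = random.Random(seed)
    nodes = [start]
    parent = {0: None}

    def point_free(p):
        # A point is free if it lies strictly outside every circular obstacle
        return all(math.hypot(p[0] - cx, p[1] - cy) > r for cx, cy, r in obstacles)

    def segment_free(a, b, n=10):
        # Approximate edge check: test n + 1 evenly spaced points along the segment
        return all(
            point_free((a[0] + (b[0] - a[0]) * k / n, a[1] + (b[1] - a[1]) * k / n))
            for k in range(n + 1)
        )

    for _ in range(max_iters):
        # Sample a random point, biased toward the goal 10% of the time
        if rng.random() < 0.1:
            sample = goal
        else:
            sample = (rng.uniform(bounds[0], bounds[1]), rng.uniform(bounds[2], bounds[3]))
        # Nearest existing node (linear scan; a k-d tree would scale better)
        i_near = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], sample))
        near = nodes[i_near]
        d = math.dist(near, sample)
        if d == 0:
            continue
        # Steer from the nearest node toward the sample by at most `step`
        t = min(step, d) / d
        new = (near[0] + t * (sample[0] - near[0]), near[1] + t * (sample[1] - near[1]))
        if not segment_free(near, new):
            continue
        nodes.append(new)
        parent[len(nodes) - 1] = i_near
        # Stop once the new node can connect directly to the goal
        if math.dist(new, goal) <= goal_tol and segment_free(new, goal):
            path, i = [], len(nodes) - 1
            while i is not None:  # walk parent pointers back to the start
                path.append(nodes[i])
                i = parent[i]
            path.reverse()
            if path[-1] != goal:
                path.append(goal)
            return path
    return None

path = rrt(start=(0.0, 0.0), goal=(9.0, 9.0),
           obstacles=[(5.0, 5.0, 2.0)], bounds=(0.0, 10.0, 0.0, 10.0))
print(path is not None)
```

The fixed `seed` keeps runs reproducible, which is useful when comparing planner variants. The linear nearest-neighbour scan and sampled edge check are deliberate simplifications that keep the sketch short.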