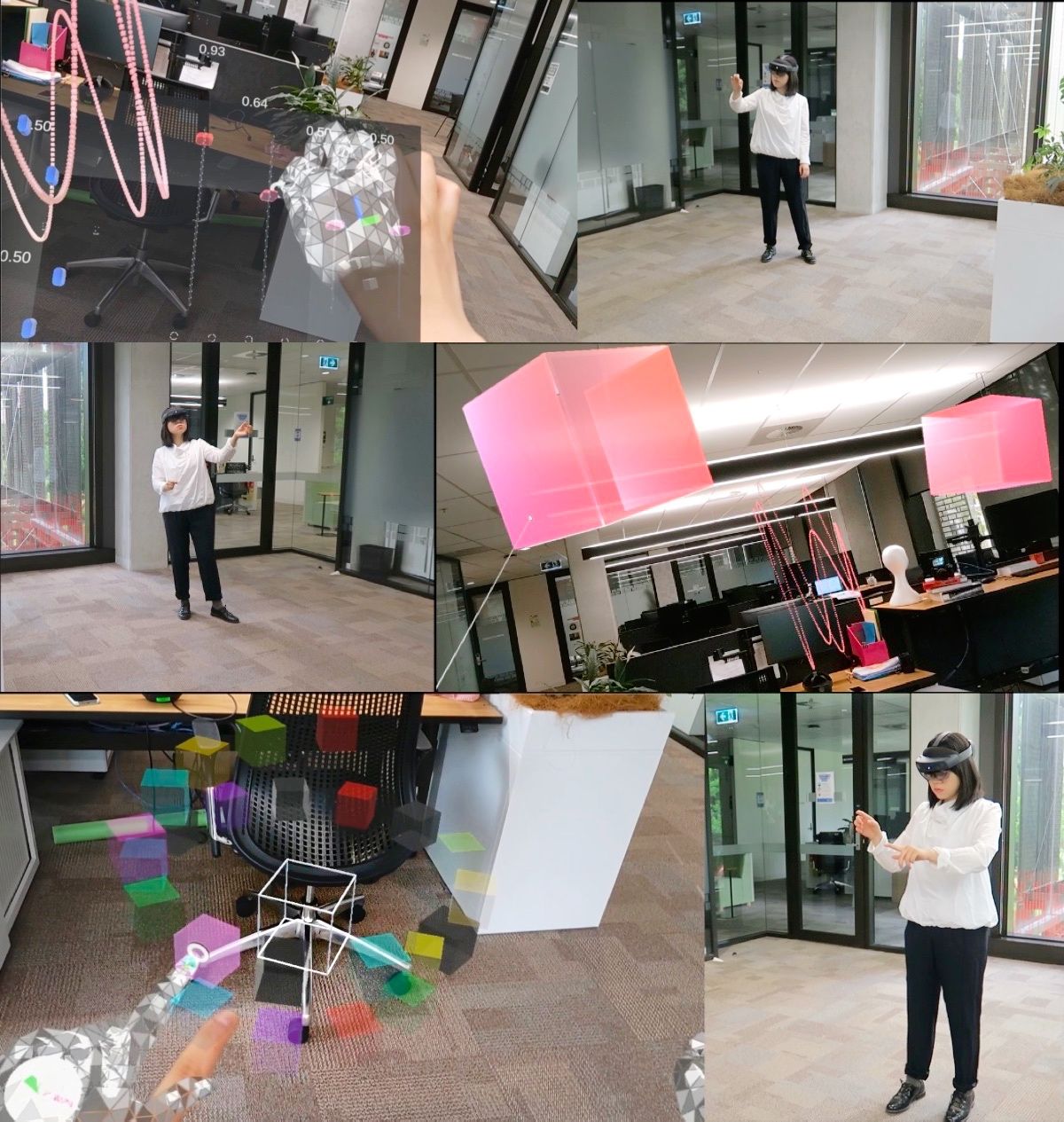

The idea of this project is to create intelligent musical instruments that predict human musical interactions in order to help people create music. This work involves encapsulating machine learning models of creative interaction in a playable instrument that runs on an augmented reality platform such as Meta Quest.

The challenge here is to create a musically interesting way of guiding (rescuing?) AI music within a spatial computing interface.

As part of this project, you will conceptualise, create, and evaluate a musical system. You’ll need to be comfortable learning new programming languages and should enjoy working with physical hardware. It would be advantageous to have taken Sound and Music Computing and Human-Computer Interaction.

One focus of this project is to use augmented reality systems such as Meta Quest or others. You would use Unity or other 3D interactive software development tools to create your system.

You can take inspiration from some of our previous intelligent musical instruments that you can see here. In particular, you should read about our work designing AR musical instruments.

For an Honours or Master's project, we would expect you to create a working prototype that includes an ML model and enables interactive sound or music to be created. You would also need to complete a formal evaluation of your system. This project could also be the basis of a wider PhD project.

Please read information about joining the Sound, Music, and Creative Computing Lab before applying for this project.

How to Apply

To apply for this project, contact Charles Martin.

Include:

- your CV

- your unofficial transcript (if you are an ANU student)

- a brief statement (200 words) in your email explaining how you would approach this project

Make sure to specify the skills and accomplishments you have that would help you to complete this project.

Useful Papers and Resources:

- Sonic Sculpture: Activating Engagement with Head-Mounted Augmented Reality. Martin, Liu, Wang, He and Gardner. 2020.

- Cubing Sound: Designing a NIME for Head-mounted Augmented Reality. Wang and Martin. 2022.

- Mobility, Space and Sound Activate Expressive Musical Experience in Augmented Reality. Wang, Xi, Adcock, and Martin. 2023.

- Augmenting Sculpture with Immersive Sonification. Wang, Gardner, and Martin. 2022.

- Understanding Musical Predictions With an Embodied Interface for Musical Machine Learning

- Performing with a Generative Electronic Music Controller

- A Small-Data Mindset for Generative AI Creative Work. Vigliensoni et al.